
I recently completed my Ph.D. at the Wireless Information Network Lab (WINLAB) at Rutgers University, where I worked under the guidance of Dr. Marco Gruteser, Dr. Narayan Mandayam and Dr. Kristin Dana. In Fall 2014, I will be starting as a post-doctoral research associate at Carnegie Mellon University under the mentorship of Prof. Peter Steenkiste (CMU) and in collaboration with Dr. Fan Bai (GM labs). For my dissertation, I worked on a novel interdisciplinary concept called visual MIMO that explores the use of cameras and other optical arrays as receivers in a communication system. My research spans applications including car-2-car communication, sharing information with mobile phones and indoor positioning. During my internship at Qualcomm in the summer of 2013, I worked on a visible light communication application. My research interests lie in system design and prototyping, and include visible light communication (VLC), mobile computing, vehicular networking, computer vision, augmented reality, wearable systems and localization. I love tinkering with hardware and building systems.



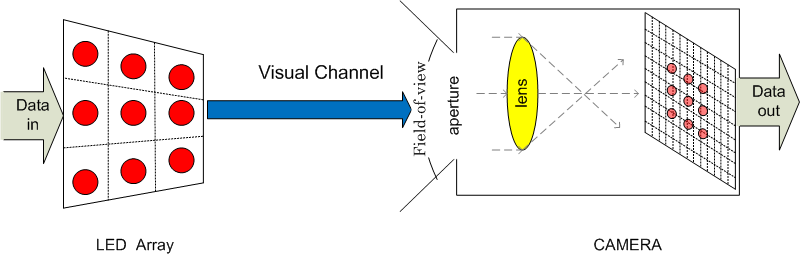

Visual Channel Models

Unlike RF MIMO channels, where multipath and fading dominate, visual channels are characterized primarily by visual distortions. We model a visual MIMO channel subject to perspective distortions, lens-blur artifacts, spatial interference from multiple light emitters, and synchronization mismatch between the transmitter and the camera. We borrow from computer vision theory and also propose techniques that apply to camera-based communication channels in general.

Links: Mobicom'10 [paper], [Rutgers Engineering Week Poster], CISS'11 [paper], ProCam'11 [paper]


LED-to-Camera Communication for Car-2-Car Communication

The inherent limitations in RF spectrum availability and its susceptibility to interference make it difficult to meet the reliability required for automotive safety applications. Visual MIMO applied to vehicular communication proposes to reuse existing LED rear lights and headlights as transmitters and existing cameras (e.g. those used for parking assistance, rear-view cameras) as receivers. We designed a proof-of-concept prototype of a visual MIMO system consisting of an LED transmitter array and a high-speed camera. We also propose link-layer techniques, such as rate adaptation, that let such systems adapt to visual channel distortions.

Links: Mobisys'11 [paper][WINLAB Poster], SECON'11 [paper][slides]


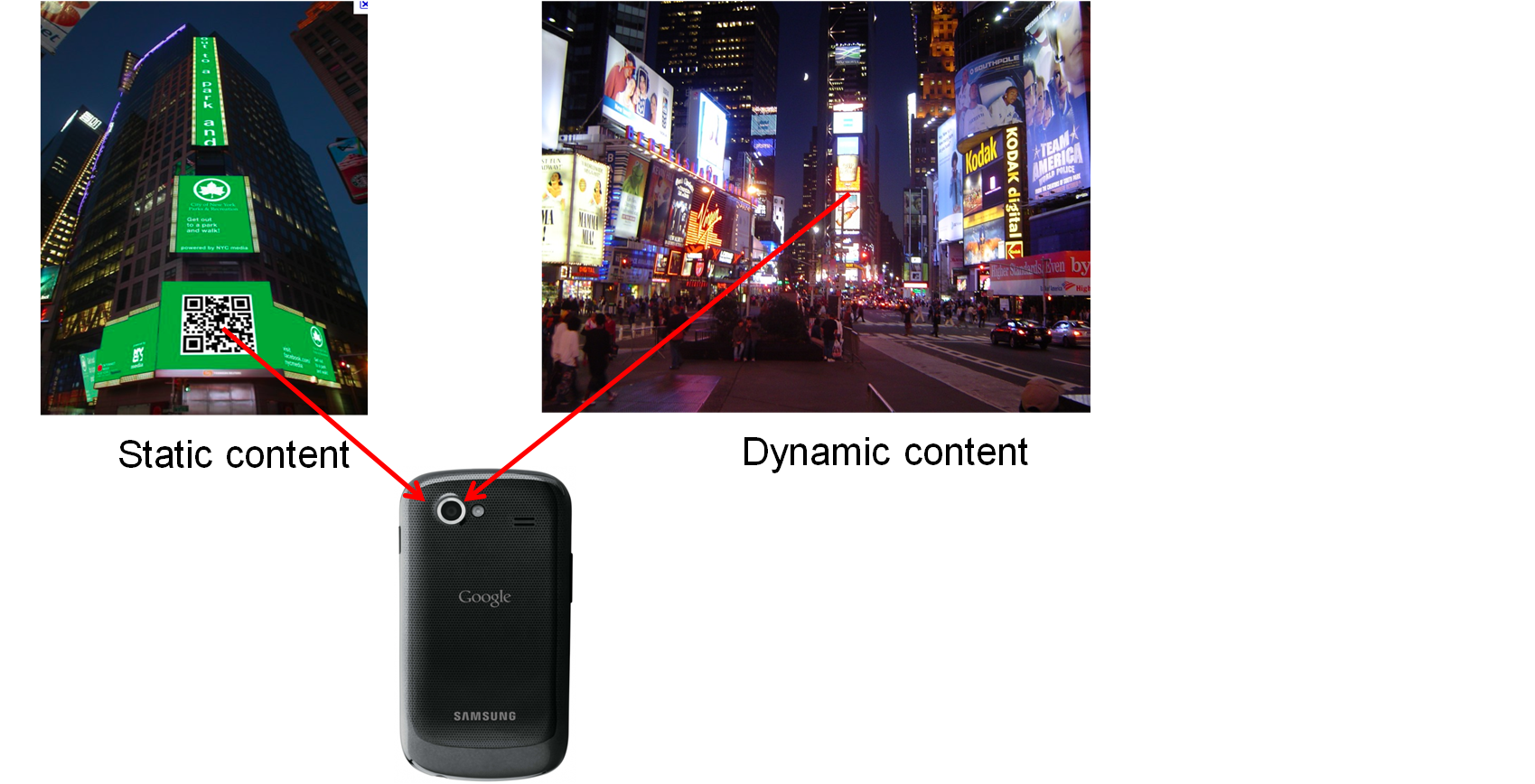

Display screens-to-Camera Communication

The ubiquitous use of QR codes motivates building novel camera communication applications in which cameras decode information from pervasive display screens such as billboards, TVs and monitors. We are exploring methods, inspired by communication and computer vision techniques, to communicate from display screens to off-the-shelf camera devices.

Links: PerCom'14 [paper], WACV'12 [paper], CVPR'12 [poster], IEEE GlobalSIP [accepted manuscript]


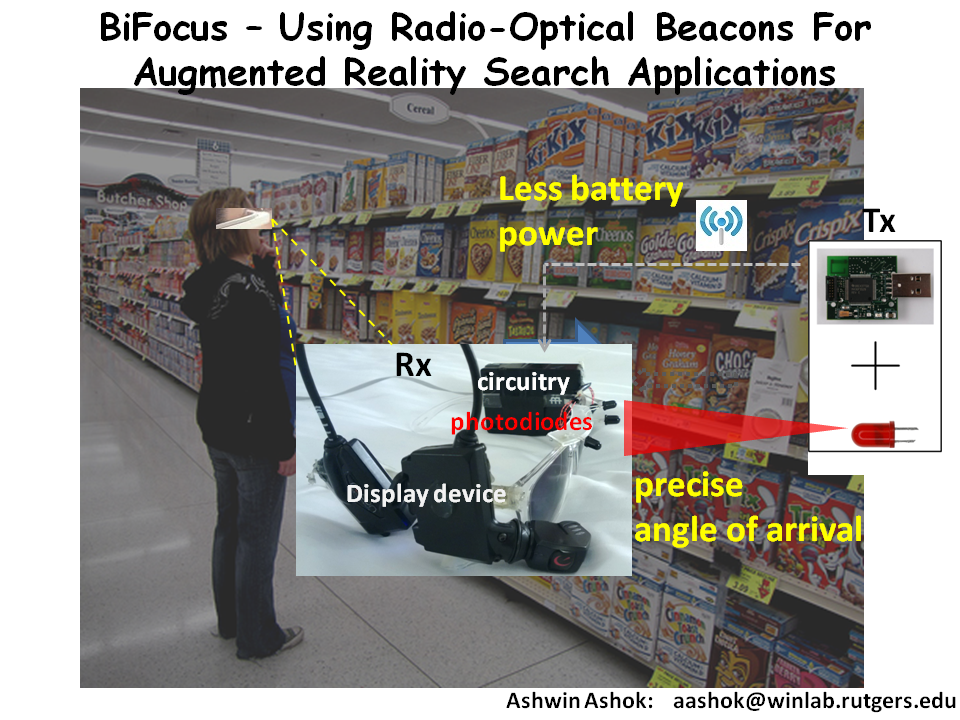

Fine-grained Positioning through Radio-synchronized Optical Link

Estimating the position of nearby devices accurately and at fine resolution is a hard problem, yet it is important to many context-aware applications, such as augmented reality, autonomous automotive systems and smart manufacturing systems. We are exploring a technique that integrates wireless wearable devices with hardware adjuncts to provide precise, highly accurate positioning of objects and people in indoor environments.

Links: Mobisys'13 demo [paper][prelim video]


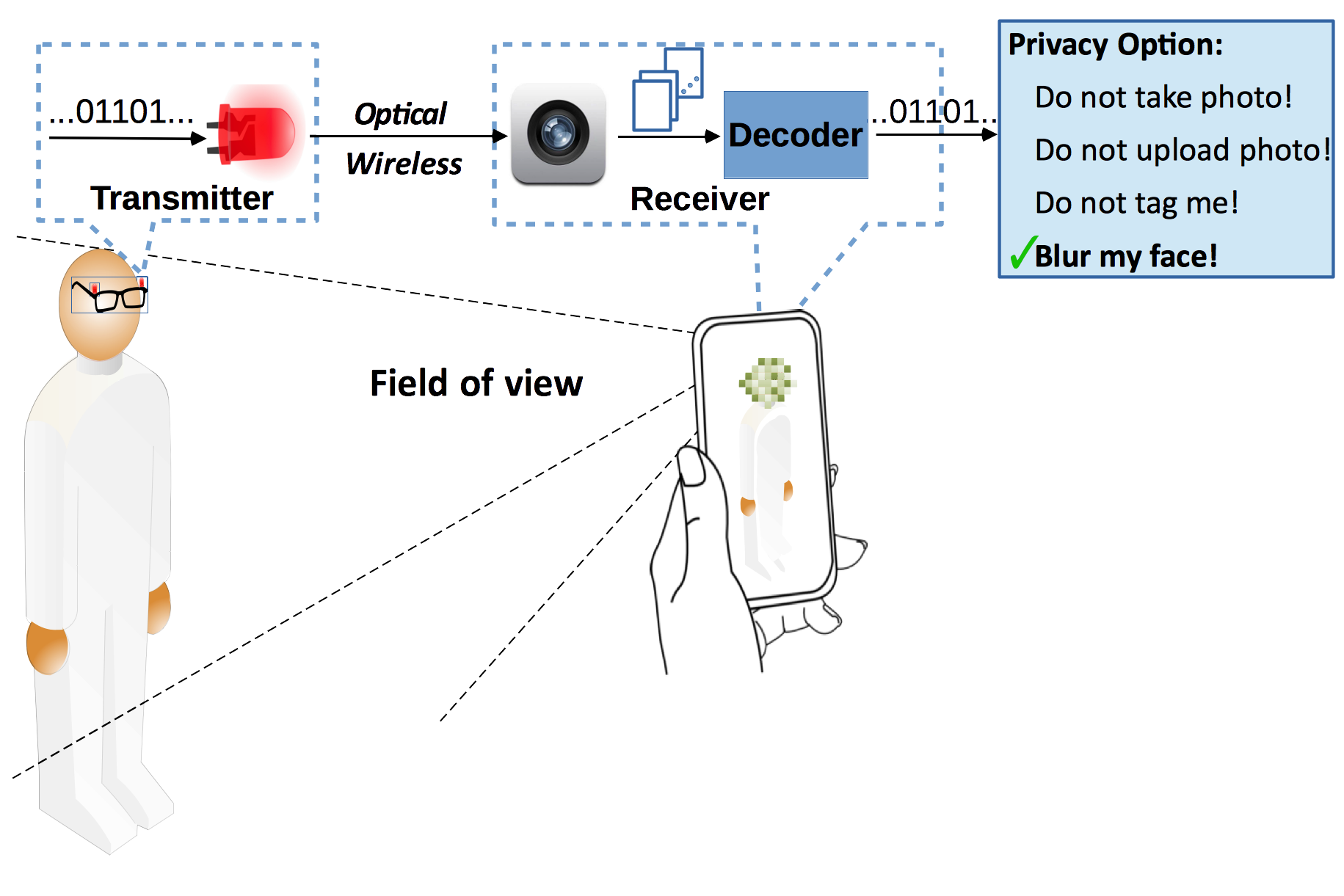

Privacy Respecting Cameras

The ubiquity of cameras in today's world has played a key role in the growth of sensing technology and mobile computing. However, it has also raised serious concerns about the privacy of people who are photographed, intentionally or unintentionally. We are exploring the use of near-visible/infrared light communication to design "invisible light beacons" through which the privacy preferences of photographed users are communicated to cameras. In particular, we explore a design where the beacon transmitters are worn by users on their eyewear and transmit a privacy code through ON-OFF patterns of light beams from IR LEDs.

Links: VLCS/MobiCom'14 [paper]
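To give a rough feel for ON-OFF signalling of a privacy code, here is a minimal Python sketch. It is only an illustration and not the encoding used in the paper: it assumes one camera brightness sample per bit, a fixed detection threshold, and an 8-bit code, and the function names `encode_ook` and `decode_ook` are hypothetical.

```python
from typing import List


def encode_ook(code: int, num_bits: int = 8) -> List[int]:
    """Map an integer code to LED states, most significant bit first (1 = ON, 0 = OFF)."""
    if not 0 <= code < (1 << num_bits):
        raise ValueError("code does not fit in num_bits")
    return [(code >> shift) & 1 for shift in range(num_bits - 1, -1, -1)]


def decode_ook(intensities: List[float], threshold: float = 0.5) -> int:
    """Threshold one brightness sample per bit and rebuild the integer code."""
    code = 0
    for sample in intensities:
        code = (code << 1) | (1 if sample > threshold else 0)
    return code


# Assumed example: an arbitrary 8-bit privacy code.
pattern = encode_ook(0b10110010)

# Hypothetical camera readings: ON frames look bright, OFF frames look dim.
readings = [0.9 if bit else 0.1 for bit in pattern]

# The receiver recovers the code that was sent.
assert decode_ook(readings) == 0b10110010
```

A real receiver would also have to locate the beacon in the image and synchronize with the transmitter, which this sketch leaves out.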

Time-of-Flight camera based Communication

Time-of-Flight cameras, or depth-sensing cameras such as Microsoft's Kinect, have become popular because they can sense depth, that is, how far an object is from the camera. We are exploring techniques to use such cameras for communication along the lines of visual MIMO.

Links: ICCP'14 [paper]



Arduino Shoes

Designing a foot-tracking device that can be embedded into a shoe and communicates with a smartphone over Bluetooth (more information upon request).

NOTE: The material below is presented to allow timely dissemination of the work. Copyrights and all rights therein are retained by the copyright holders.

A. Ashok, V. Nguyen, M. Gruteser, N. Mandayam, W. Yuan, and K. Dana, Do Not Share! Invisible Light Beacons for Signalling Preferences to Privacy-Respecting Cameras, in Proceedings of ACM MobiCom, VLCS Workshop, 2014 (accepted).

A. Ashok, S. Jain, M. Gruteser, N. Mandayam, W. Yuan, and K. Dana, Capacity of Pervasive Camera Based Communications Under Perspective Distortions, in PerCom: Proceedings of IEEE Pervasive Computing and Communications, 2014.

W. Yuan, K. Dana, R. Howard, A. Ashok, R. Raskar, M. Gruteser, and N. Mandayam, Phase Messaging Method for Time-of-flight Cameras, in ICCP: Proceedings of IEEE International Conference on Computational Photography, 2014.

A. Ashok, M. Gruteser, N. Mandayam, J. Silva, M. Varga, and K. Dana, Challenge: Mobile Optical Networks Through Visual MIMO, in MobiCom: Proceedings of the sixteenth annual international conference on Mobile computing and networking. New York, NY, USA: ACM, pp. 105-112, 2010.

A. Ashok, M. Gruteser, N. Mandayam, K. Dana, Characterizing Multiplexing and Diversity in Visual MIMO, Proceedings of the 45th Annual Conference on Information Sciences and Systems (CISS), pp. 1-6, March 2011

A. Ashok, M. Gruteser, N. Mandayam, T. Kwon, W. Yuan, M. Varga, K. Dana, Rate Adaptation in Visual MIMO, Proceedings of IEEE Conference on Sensor, Mesh and Ad Hoc Communications and Networks (SECON), pp. 583-591, 2011

W. Yuan, K. Dana, M. Varga, A. Ashok, M. Gruteser, N. Mandayam, Computer Vision Methods for Visual MIMO Optical System, Proceedings of the IEEE International Workshop on Projector-Camera Systems (held with CVPR), pp. 37-43, 2011

M. Varga, A. Ashok, M. Gruteser, N. Mandayam, W. Yuan, K. Dana, Demo: Visual MIMO-based LED-Camera Communication Applied to Automobile Safety, Proceedings of ACM/USENIX International Conference on Mobile Systems, Applications, and Services (MobiSys), pp. 383-384, 2011

W. Yuan, K. Dana, A. Ashok, M. Varga, M. Gruteser, N. Mandayam, Dynamic and Invisible Messaging for Visual MIMO, Proceedings of the IEEE Workshop on the Applications of Computer Vision (WACV), pp. 345-352, 2012

W. Yuan, K. Dana, A. Ashok, M. Varga, M. Gruteser, N. Mandayam, Photometric Modeling for Active Scenes, IEEE CVPR Workshop on Computational Cameras and Displays, Poster Presentation, 2012

A. Ashok, C. Xu, T. Vu, M. Gruteser, Y. Zhang, R. Howard, N. Mandayam, W. Yuan, K. Dana, Demo: BiFocus - Using Radio-Optical Beacons for An Augmented Reality Search Application, Proceedings of ACM/USENIX International Conference on Mobile Systems, Applications, and Services (MobiSys), 2013

W. Yuan, K. Dana, A. Ashok, M. Gruteser, N. Mandayam, Spatially Varying Radiometric Calibration for Camera-Display Messaging, IEEE Global Conference on Signal and Image Processing (GlobalSIP) Symposium on Mobile Imaging, Dec 2013

T. Vu, A. Ashok, SignetRing: Distinguishing Users and Devices using Capacitive Touch Communication, InterDigital Innovation Challenge (I2C) finalist, 2012 (available upon request)

Tam Vu, Ashwin Ashok, Akash Baid, Marco Gruteser, Richard Howard, Janne Lindqvist, Predrag Spasojevic, Jeffrey Walling, Demo: User Identification and Authentication with Capacitive Touch Communication, Proceedings of ACM/USENIX International Conference on Mobile Systems, Applications, and Services (MobiSys), 2012

News!

Privacy Respecting Cameras work at VLCS/Mobicom Workshop

Our paper "Do Not Share! Invisible Light Beacons for Signalling Preferences to Privacy-Respecting Cameras" has been accepted at the 1st ACM Workshop on Visible Light Communications and Systems. This is a part of the MobiCom conference 2014.

Indoor Localization Competition

I will lead a team that will present a localization system that uses our hybrid radio-optical beaconing tags and receiver.

Our Phase-Messaging array paper on Time-of-Flight camera based communication has been accepted to the International Conference on Computational Photography.

Our screen-camera communication capacity paper has been accepted to PerCom.

I have been invited to serve as a TPC member of the Mobisys'2014 Ph.D. forum.

On 6th Nov 2013 I gave a talk at the Stevens Institute of Technology ECE Seminar on Camera Based Communication Using Visual MIMO.

Internship at Qualcomm

On 16th Aug 2013 I completed my summer internship at Qualcomm, NJ, where I worked with Dr. Aleksandar Jovicic on a VLC application.

Talk at Qualcomm

I gave a talk on Camera based Optical Wireless at the Qualcomm New Jersey Research Center in July 2013.

Mobisys'2013 Demo

I presented a demo of our "Augmented Reality Glasses" (see BiFocus: Radio-Optical Beaconing for Augmented Reality Search Application) at the Mobile Systems and Applications Conference (Mobisys) in Taipei, Taiwan.